AI Is Changing Software Engineering, So I Had to Experience It Firsthand

AI has drastically changed software engineering over the last year. Writing code one line at a time is no longer strictly necessary. Teams dedicated solely to rapidly producing UI/UX designs are becoming less essential. Detailed business requirements, user stories, and test scripts can now be generated faster and more easily than ever. Testing and quality assurance, along with traceability back to requirements, are increasingly automated.

After about a decade away from hands-on coding and development, I decided to jump back in neck-deep to see how AI could change the software development process.

About ten years ago, I started a life sciences–adjacent application development company called Energize Technologies. The goal was to build CommuniTri, a visual-analysis triathlon coaching application in which athletes could upload videos of themselves swimming, biking, and running and receive feedback and coaching on their technique. The longer-term vision was to pair coaches with athletes to analyze videos and develop personalized training plans and, once we had accumulated enough data, to build AI algorithms that could automatically analyze form and provide immediate feedback.

I built a team and spent about six months developing the application. Eventually, I scrapped the project because another, better opportunity came along. In hindsight, I may have enjoyed the process of building the application more than the idea of selling and marketing it. But I digress.

During those six-plus months, I had to write a long business requirements document, create architecture diagrams and data models, work with a UI/UX designer to develop user stories, wireframes, and high-fidelity mockups, hire developers to build the application, and then bring in a QA tester to generate test scripts and test each release. Let’s just say—it was a process.

Fast forward to just over a week ago. I wanted to see how much the software engineering space had changed over the last six months. I had been sitting on an idea for a family culture and incentive application for about a year and decided to try building it. I knew I didn't have the time or energy for the lengthy process I had gone through ten years earlier, so I decided to give AI-driven software engineering a try and see how powerful it really was.

And honestly, I was blown away.

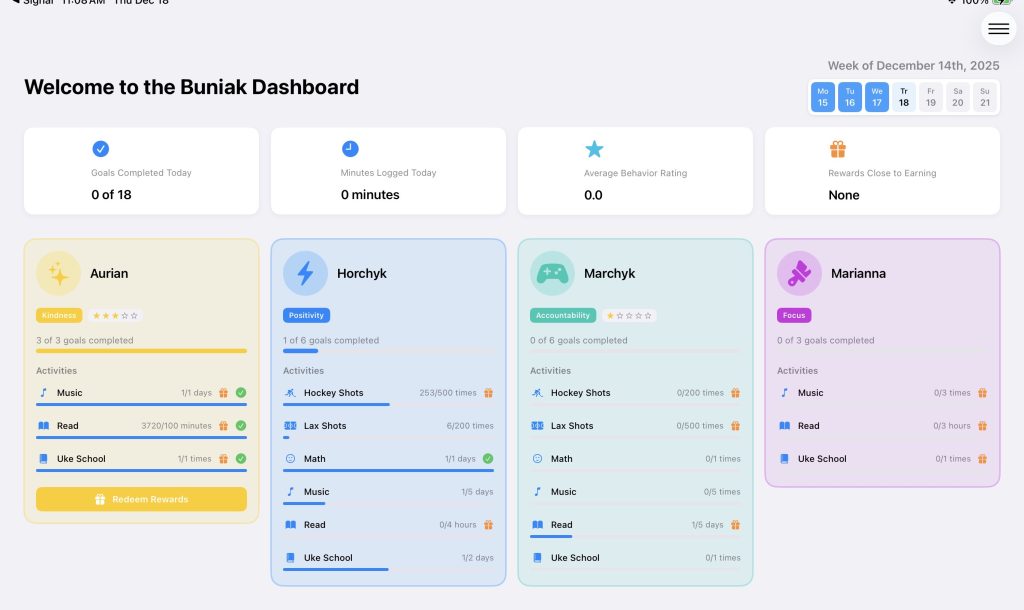

For context, over the last year and a half I’ve had a 3×4-foot whiteboard hanging on my kitchen wall. On it are the names of all four family members—me, my wife, and my two sons, who are nine and twelve. Next to each name are the activities that person needs to complete: practice piano, take 100 lacrosse shots, read for 30 minutes, clean their room, do extra math homework, and so on. When someone completes a task, they mark it off.

Each person also has a “word of the week” to think about. For example, one of my sons was struggling with accountability, so his word of the week was ownership. At dinner—either nightly or at least on Sunday evenings—we talk about how each of us did relative to our word of the week. It creates a shared growth mindset and holds us accountable to one another. We also review whether weekly goals were completed.

I created an incentive plan: if a family member achieved all of their goals, they earned a small reward, such as $5 at the end of the week. This not only provided motivation but also taught concepts like saving money and earning interest.
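To make the whiteboard rules concrete, here is a minimal Python sketch of how they might be modeled. It is an illustration only, not the app's actual code: the class and function names (`Activity`, `FamilyMember`, `weekly_reward`) are hypothetical, and the all-or-nothing rule assumes every listed activity must be completed to earn the $5.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class Activity:
    name: str
    completed: bool = False  # "marked off" on the whiteboard


@dataclass
class FamilyMember:
    name: str
    word_of_week: str
    activities: list[Activity] = field(default_factory=list)


# Hypothetical flat reward matching the "$5 at the end of the week" example.
WEEKLY_REWARD = Decimal("5.00")


def weekly_reward(member: FamilyMember) -> Decimal:
    """All-or-nothing rule: the reward is earned only if every activity is done."""
    if member.activities and all(a.completed for a in member.activities):
        return WEEKLY_REWARD
    return Decimal("0.00")


son = FamilyMember(
    name="Son",
    word_of_week="ownership",
    activities=[
        Activity("Practice piano", completed=True),
        Activity("Take 100 lacrosse shots", completed=True),
        Activity("Read for 30 minutes", completed=False),
    ],
)
print(weekly_reward(son))  # prints 0.00 because reading is not yet done
```

Using `Decimal` rather than floating point keeps money amounts exact, which matters once savings and interest enter the picture.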

I decided to turn this concept into an iPad application.

About a week and a half ago, I opened ChatGPT and typed:

“Please write up a business requirements document and an architecture for the following requirements.”

I then wrote 718 words (less than a page of single-spaced text) describing the idea, the rules, and how I wanted the app to work. There were no formally numbered requirements, just concepts and sentences, very similar to what I've written here.

Two minutes later, I had a fully generated business requirements document. It described the product vision, short- and long-term objectives, user types, detailed functional requirements, business rules, a high-level system architecture, a full tech stack, and a data model. After about an hour of back-and-forth refinement, it was extremely well thought out and largely correct.

I then took that output and pasted it into Cursor AI. It probably took me about two hours to set up Cursor, Xcode, iCloud services, and Firebase—mostly because I hadn’t done any of this in ten years.

I wanted to see how well Cursor could build the application on its own.

Over the next 15–20 minutes, I watched Cursor break down the requirements and generate code in real time. I was absolutely amazed watching it translate requirements directly into code. Then came the moment of truth: I compiled and ran the application.

About 20 errors were thrown in Xcode, and the app didn’t run. I assumed I’d have to copy each error into Google or Stack Overflow and debug everything manually. Instead, I copied the error messages directly into the Cursor AI chat.

It fixed every single one.

It took about an hour to resolve all the issues, but eventually the application compiled and ran successfully. Four hours in, I had a working prototype.

Of course, although the application ran, it was nowhere near production-ready. I had to go screen by screen, telling Cursor exactly what I wanted. I changed fields, modified the data model, adjusted layouts, redesigned user flows, and reworked parts of the architecture. After another 8–10 hours of refining everything through the chat interface, I decided to scrap the entire project and start over.

This time, instead of feeding Cursor my entire ChatGPT-generated requirements document, I started smaller and built progressively. I gave it a high-level concept, defined the users, outlined the screens, and provided a basic data model. Cursor built the framework of the app, and then I spent about 40 hours systematically working through each screen.

I started with authentication and security. Then I built an administrative backend to manage children, activities, and goals. Cursor required a lot of direction around user flows and design, so I often jumped back into ChatGPT, asked it to generate a high-fidelity mockup, and then brought that into Cursor to implement.

This became a highly interactive process of tweaking, debugging, and prototyping. Instead of waiting for a two-week sprint, I could see changes in minutes. If I didn’t like something, I typed “revert back” and tried a new approach.

I found myself relying on everything I had learned in computer science (data normalization, entity relationships, and system design), but instead of talking to a data architect, designer, or developer, I was having those conversations with AI. Often, it didn't fully understand what I was trying to accomplish, which required significant back-and-forth. I also found myself diving into the code directly.

That’s when I noticed something concerning.

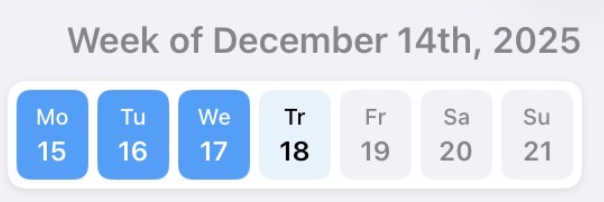

About 30 hours in, I discovered that some of the logic—specifically around date calculations—was fundamentally wrong. I had asked Cursor to display seven days of the week, darken past days, highlight the current day, and keep future days transparent. The app thought it was Tuesday when it was actually Thursday.

After digging in, I realized the application was using a counter instead of real dates. The week always started on Monday and incremented forward, regardless of when the account was created. The logic worked, but it was conceptually wrong. All weekly calculations, analytics, dashboards, and future AI insights were built on that flawed assumption.
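One plausible reconstruction of this kind of bug, sketched in Python for readability (the real app targets iPad, and these function names are hypothetical, not the code Cursor generated), contrasts a day counter that assumes day zero is always Monday with a calculation based on real calendar dates. In this sketch, an account created on a Wednesday sees Thursday labeled as Tuesday. The Monday week start is an assumption carried over from the description above.

```python
from datetime import date, timedelta
from enum import Enum

WEEKDAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


class DayState(Enum):
    PAST = "past"      # drawn darkened
    TODAY = "today"    # drawn highlighted
    FUTURE = "future"  # drawn transparent


def flawed_weekday(created: date, today: date) -> str:
    """Counter-based: day 0 is always treated as Monday, whatever weekday `created` really was."""
    counter = (today - created).days
    return WEEKDAYS[counter % 7]


def real_weekday(today: date) -> str:
    """Date-based: date.weekday() returns 0 for Monday through 6 for Sunday."""
    return WEEKDAYS[today.weekday()]


def week_view(today: date) -> list[tuple[date, DayState]]:
    """The seven real dates of the current Monday-to-Sunday week, with display states."""
    monday = today - timedelta(days=today.weekday())
    view = []
    for offset in range(7):
        day = monday + timedelta(days=offset)
        if day < today:
            state = DayState.PAST
        elif day == today:
            state = DayState.TODAY
        else:
            state = DayState.FUTURE
        view.append((day, state))
    return view


# An account created on Wednesday, 3 Jan 2024, checked on Thursday, 4 Jan 2024.
created, today = date(2024, 1, 3), date(2024, 1, 4)
print(flawed_weekday(created, today))  # Tue
print(real_weekday(today))             # Thu
```

Deriving the week from real dates also lets weekly totals, dashboards, and analytics be keyed by calendar date rather than by an offset that only makes sense relative to the account's creation day.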

A trained software engineer likely would have caught this much earlier. Cursor AI didn't understand why the logic was wrong; it only knew that the code ran. Fixing it took hours of re-engineering.

In regulated environments like Life Sciences, especially for GxP-regulated systems, this would never fly. Trusting AI-generated code without deep understanding could easily lead to serious downstream data quality issues.

Long story short, AI-driven software engineering is incredible. It is fast, powerful, and remarkably effective for prototyping and rapid iteration, and it will only continue to improve. But it is still very young.

There are real risks associated with using these tools without experienced software engineers and architects guiding the process. Those risks are amplified in regulated industries like Life Sciences, where data provenance, traceability, and quality are non-negotiable. Cybersecurity is another major concern. When you are not reviewing every line of code, vulnerabilities can easily slip through. While AI-driven security tools can help, they still lack the creativity and intuition of a human attacker.

AI can generate screens and user flows, but it cannot reliably predict how real humans will actually use an application. I repeatedly asked AI to optimize user flows and reduce clicks, only to receive solutions that looked reasonable on paper but fell apart in practice. Human judgment still matters.

Scalability is another unresolved issue. More than once, I saw data models and logic that worked for a prototype but would fail at scale. In one case, reward types were hard-coded as monetary only. A human engineer would naturally anticipate future flexibility, but AI tends to optimize narrowly for the immediate requirement. Identifying and fixing those issues required careful review and hours of rework.
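To illustrate the difference, here is a minimal Python sketch of a more flexible reward model. Instead of assuming every reward is a monetary amount, each reward carries a kind, and only monetary rewards carry an amount. The non-monetary kinds (`SCREEN_TIME`, `PRIVILEGE`) and all names here are hypothetical examples, not features of the actual app.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum
from typing import Optional


class RewardKind(Enum):
    MONEY = "money"
    SCREEN_TIME = "screen_time"  # hypothetical non-monetary kind
    PRIVILEGE = "privilege"      # hypothetical, e.g. picking the weekend movie


@dataclass(frozen=True)
class Reward:
    kind: RewardKind
    description: str
    amount: Optional[Decimal] = None  # used only by MONEY rewards
    minutes: Optional[int] = None     # used only by SCREEN_TIME rewards

    def __post_init__(self) -> None:
        # Validate that each kind carries the value it needs.
        if self.kind is RewardKind.MONEY and self.amount is None:
            raise ValueError("a monetary reward needs an amount")
        if self.kind is RewardKind.SCREEN_TIME and self.minutes is None:
            raise ValueError("a screen-time reward needs a number of minutes")


def total_money(rewards: list[Reward]) -> Decimal:
    """Sum only the monetary rewards, e.g. for a savings balance."""
    return sum((r.amount for r in rewards if r.kind is RewardKind.MONEY), Decimal("0"))


earned = [
    Reward(RewardKind.MONEY, "Weekly goals met", amount=Decimal("5.00")),
    Reward(RewardKind.SCREEN_TIME, "Extra gaming time", minutes=30),
    Reward(RewardKind.PRIVILEGE, "Pick the weekend movie"),
]
print(total_money(earned))  # 5.00
```

With this shape, supporting a new kind of reward means adding an enum member and its validation rule rather than migrating a data model that assumed every reward was money.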

The takeaway for me is clear. AI is not replacing software engineering—it is changing the role of the software engineer. The work shifts away from typing code line by line and toward system design, logic validation, data modeling, security, and quality assurance. For the foreseeable future, especially in enterprise and Life Sciences environments, successful software development will require humans and AI working together.

AI dramatically lowers the cost and time required to build software. But understanding what you are building, why you are building it, and whether it is correct still requires human expertise. Used thoughtfully, AI is a force multiplier. Used blindly, it can quietly introduce risk.

Software engineering isn’t disappearing.

It’s being redefined—and we’re only at the beginning.

P.S. Keep an eye out for the application; it's coming out before the New Year!